Somewhere right now, a music teacher has 34 student composition projects open in 34 browser tabs.

They arrived as PDFs. A few came as photos of handwritten manuscripts. Two are voice memos. One student submitted a link to a Google Doc containing a screenshot of notation software that the teacher does not have installed. The assignment was due four days ago. The teacher has been awake since 6am and it is now 10pm on a Thursday.

This is not a story about one teacher. It is the story of music composition grading at scale – and it is happening in schools everywhere, every semester, because composition is one of the most powerful things we can assign students and also one of the hardest things we can possibly assess.

This post is about fixing that. Not theoretically. Practically. With a rubric framework that works, a workflow that does not consume your evenings, and a clear explanation of how modern tools have changed what is possible for music teachers who grade composition at scale.

Why Grading Composition Is Uniquely Hard (And Why Generic Advice Does Not Help)

Search "how to grade music composition assignments" and you will find a lot of rubric PDFs.

What you will not find is an honest conversation about the real problem, which is not rubric design. Most music teachers already know what good composition looks like. The problem is the operational reality of assessing it.

Consider what grading a composition assignment actually involves at scale:

You are not just evaluating one score. You are evaluating 30, or 60, or 90 scores, each submitted in a different format, through a different channel, at a different time. You are trying to distinguish between a student who genuinely understood harmonic motion and one who got lucky. You are writing feedback specific enough to be useful while fast enough to return before the moment has passed. You are doing this on top of rehearsals, performances, meetings, and every other demand of a music teacher's actual job.

The rubric is the easy part. The workflow is the hard part.

And the workflow, for most music teachers, is broken.

The Real Problem: Composition Grading Has a Logistics Crisis

Here is what happens when a music teacher assigns a composition project without a purpose-built system.

Students submit work through whatever channel they have available. Google Classroom comment threads fill with shared links. Some links are broken. Some students accidentally share the wrong file. Some have not enabled sharing permissions. A few submit PDFs of handwritten manuscripts that are barely legible. Someone emails you a photo taken at an angle in bad lighting. One student, bless them, records themselves humming the melody into their phone.

The teacher downloads what they can, opens what opens, and begins assessing. Feedback is written in a separate document, referencing measure numbers that the student will have to cross-reference manually. Or it is typed into a Google Form. Or it is written on the PDF in annotation software that the student may not be able to open.

When feedback finally reaches students – often a week or more later – most of them have already moved on mentally. The connection between the work they did and the feedback they are receiving has decayed. The feedback lands with less force than it should.

Meanwhile, the teacher has spent hours on logistics that have nothing to do with music education.

This is not a rubric problem. This is a systems problem.

What Good Composition Assessment Actually Looks Like

Before getting to tools, it helps to be clear about what we are trying to achieve. Because the goal of grading a composition assignment is not a number in a gradebook. It is a feedback loop that makes the next composition better.

That means good composition assessment has three qualities:

It is specific. "Good work" teaches nothing. "Your suspension in bar 6 resolves downward correctly, but the approach note on beat 4 creates a parallel fifth with the bass – look at beat 3 and see what you can adjust" teaches something a student can act on.

It is timely. Feedback that arrives ten days after submission lands in a completely different cognitive context than feedback that arrives two days after submission. By then, the student's mental model of the piece has already shifted. Even highly specific feedback loses much of its value if it arrives too late to act on.

It separates the objective from the subjective. A composition assignment typically contains both: objective questions (did you use the required intervals? Is the form correct? Are the voice leading rules observed?) and subjective ones (is the melody memorable? Does the harmonic rhythm support the melodic contour?). Good assessment addresses both layers – but it addresses them differently. The objective layer can and should be checked quickly. The subjective layer is where teacher expertise matters most.

Most grading systems collapse these two layers together, which means teachers spend time on binary right/wrong checking that could be automated, leaving less time for the interpretive feedback that only a trained musician can provide.
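The objective layer can be automated because each rule reduces to a mechanical comparison. As an illustration, here is a minimal Python sketch of one such rule: the parallel-fifth problem from the feedback example above. It is not how Flat for Education or any other platform implements auto-grading. It assumes a simplified, hypothetical input format in which each voice is a list of MIDI note numbers with one note per beat, and the function name is illustrative.

```python
from itertools import combinations
from typing import List, Tuple

PERFECT_FIFTH = 7      # semitones, reduced to within one octave
UNISON_OR_OCTAVE = 0


def parallel_perfects(voices: List[List[int]]) -> List[Tuple[int, int, int, str]]:
    """Flag parallel fifths and octaves between every pair of voices.

    voices: one list of MIDI note numbers per voice, one note per beat,
    all of equal length (a hypothetical, simplified input format).
    Returns (beat, voice_a, voice_b, kind), where beat indexes the second
    chord of the offending pair.
    """
    errors = []
    for a, b in combinations(range(len(voices)), 2):
        va, vb = voices[a], voices[b]
        for beat in range(1, len(va)):
            # Interval between the two voices before and after the move,
            # reduced to one octave so compound fifths are caught too.
            before = abs(va[beat - 1] - vb[beat - 1]) % 12
            after = abs(va[beat] - vb[beat]) % 12
            # Both voices move, and in the same direction.
            same_direction = (va[beat] - va[beat - 1]) * (vb[beat] - vb[beat - 1]) > 0
            if same_direction and before == after == PERFECT_FIFTH:
                errors.append((beat, a, b, "parallel fifth"))
            elif same_direction and before == after == UNISON_OR_OCTAVE:
                errors.append((beat, a, b, "parallel octave or unison"))
    return errors


# Soprano G3 -> A3 over bass C3 -> D3: a fifth moving in parallel to a fifth.
print(parallel_perfects([[55, 57], [48, 50]]))  # [(1, 0, 1, 'parallel fifth')]
```

A real checker would also have to handle rhythm, rests, and whatever other rules an assignment specifies; the point is only that this layer is mechanical enough to hand to software, leaving the teacher's time for the interpretive layer.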

A Rubric Framework That Actually Works

Here is a rubric structure built for music composition that works across grade levels with minor adjustments.

The 5-Category Composition Rubric

1. Technical Accuracy (Objective)

Does the composition follow the stated rules of the assignment? This includes voice leading requirements, interval restrictions, cadence types, key signature usage, time signature adherence, required range, and instrumentation. This is the auto-gradable layer. Binary, checkable, not subjective.

2. Structural Integrity

Does the composition have a clear sense of form? For younger students this might mean ABA structure or a clear phrase ending. For more advanced students it might mean a sonata exposition or through-composed logic with motivic development. The question is whether the piece has intentional architecture or is merely a sequence of notes.

3. Melodic Quality

Is there a sense of direction and arrival? Does the melody breathe – alternating between tension and resolution? Does it avoid excessive repetition or aimless chromaticism? This is the most subjective category and the one where specific, measure-referenced feedback makes the biggest difference.

4. Harmonic Awareness

Do the chord choices support the melody? Does the harmonic rhythm feel appropriate to the tempo and character? Are there moments of harmonic interest beyond the purely functional? For advanced students: is there a moment where the harmony does something surprising and earned?

5. Creative Risk and Voice

This is the category that separates an assignment that followed instructions from a composition with a perspective. Did the student make a choice that was not required – and did it work? Credit for calculated risk, for a moment of unexpected color, for a decision that reflects a genuine musical personality beginning to form.

How to Weight the Categories by Grade Level

The same five categories apply from middle school through undergraduate level. What changes is the weighting.

Middle School (Grades 6-8)

Technical Accuracy: 40%

Structural Integrity: 25%

Melodic Quality: 20%

Harmonic Awareness: 10%

Creative Risk: 5%

Students at this level are building foundational craft. The objective requirements are the primary teaching target. Creative risk is acknowledged and rewarded but not heavily weighted, because the foundations are not yet stable enough to make risk fully intentional.

High School (Grades 9-12)

Technical Accuracy: 25%

Structural Integrity: 25%

Melodic Quality: 20%

Harmonic Awareness: 20%

Creative Risk: 10%

By high school, students are expected to internalize technical requirements well enough that they are no longer the primary point of assessment. The balance shifts toward expressive and harmonic choices.

Advanced / AP / Undergraduate

Technical Accuracy: 15%

Structural Integrity: 20%

Melodic Quality: 20%

Harmonic Awareness: 25%

Creative Risk: 20%

At this level, technical accuracy is assumed. The real assessment is compositional judgment – harmonic sophistication and the courage to make choices that are not just safe.
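To see how the weighting changes a grade, the sketch below applies the three tables above to the same set of category scores. It assumes each category is scored on a 1-4 performance scale and converted to a percentage; the scale, the category keys, and the function name are illustrative choices, not part of the framework itself.

```python
from typing import Dict

# Category weights (percent) from the three tables above.
WEIGHTS: Dict[str, Dict[str, int]] = {
    "middle_school": {"technical": 40, "structure": 25, "melody": 20, "harmony": 10, "risk": 5},
    "high_school": {"technical": 25, "structure": 25, "melody": 20, "harmony": 20, "risk": 10},
    "advanced": {"technical": 15, "structure": 20, "melody": 20, "harmony": 25, "risk": 20},
}
MAX_LEVEL = 4  # assumption: each category is scored from 1 to 4


def weighted_score(level: str, scores: Dict[str, int]) -> float:
    """Combine per-category scores into an overall percentage (0-100)."""
    weights = WEIGHTS[level]
    if set(scores) != set(weights):
        raise ValueError("scores must cover exactly the five rubric categories")
    total = 0.0
    for category, weight in weights.items():
        score = scores[category]
        if not 1 <= score <= MAX_LEVEL:
            raise ValueError(f"{category} score {score} is outside 1-{MAX_LEVEL}")
        # Each category contributes its weight scaled by the fraction of the top level reached.
        total += weight * score / MAX_LEVEL
    return total


# One student: strong on rules and risk-taking, weaker on harmony.
student = {"technical": 4, "structure": 3, "melody": 3, "harmony": 2, "risk": 4}
for level in WEIGHTS:
    print(level, weighted_score(level, student))
# middle_school 83.75
# high_school 78.75
# advanced 77.5
```

The same piece loses about six points between middle school and advanced weighting, because technical accuracy counts for less and harmonic awareness counts for more.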

The Tool Problem: Why Your Current Workflow Is Costing You Hours

Good rubric design is a solved problem for most experienced music teachers. The actual bottleneck is the workflow around submission, review, and feedback.

Here is what a manual composition grading workflow costs a teacher with three sections of Music Theory, 90 students total, one composition project per month:

- Submission collection: 20-30 minutes opening files, chasing broken links, converting formats

- Per-composition review: 8-15 minutes depending on complexity and feedback depth

- Feedback delivery: 5-10 minutes writing and returning comments through a separate channel

- Total per assignment cycle: 13-21 hours per month

At the higher end of that range, you are spending more than half a working week on one assignment type. That is before you calculate what happens when submissions arrive late, formats are incompatible, or students cannot access the feedback you sent.
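A short sketch makes the arithmetic behind these figures explicit. It assumes, since the list does not say, that collection time is spent once per cycle while review and feedback time are spent on each of the 90 submissions. Read that way, the listed ranges add up to roughly 20 to 38 hours; per-composition review on its own (12 to 22.5 hours) lands closest to the quoted 13-21, so that total is best treated as a conservative estimate.

```python
def cycle_hours(collection_min: float, review_min: float, feedback_min: float,
                students: int = 90) -> float:
    """Hours for one assignment cycle.

    Assumption: collection is a one-off cost per cycle, while review and
    feedback are spent on every submission.
    """
    total_minutes = collection_min + students * (review_min + feedback_min)
    return total_minutes / 60


low = cycle_hours(20, 8, 5)     # lower end of every range in the list
high = cycle_hours(30, 15, 10)  # upper end of every range
review_only = (cycle_hours(0, 8, 0), cycle_hours(0, 15, 0))
print(f"all steps: {low:.1f}-{high:.1f} h; review only: {review_only[0]:.1f}-{review_only[1]:.1f} h")
# all steps: 19.8-38.0 h; review only: 12.0-22.5 h
```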

This is why composition projects often become semester-end events rather than regular practice. Not because teachers do not value composition. Because the logistics make frequent assessment unsustainable.

What Changes When You Have the Right Platform

This is where the story of a real teacher becomes useful – not as a testimonial, but as a data point.

Sarah Chen is a middle school music director managing 94 students across three sections. Before moving to a purpose-built platform, her composition assignment cycle looked like the one described above: submissions in incompatible formats, feedback in disconnected documents, turnaround averaging nine days.

Nine days. Between submission and the moment feedback reached a student.

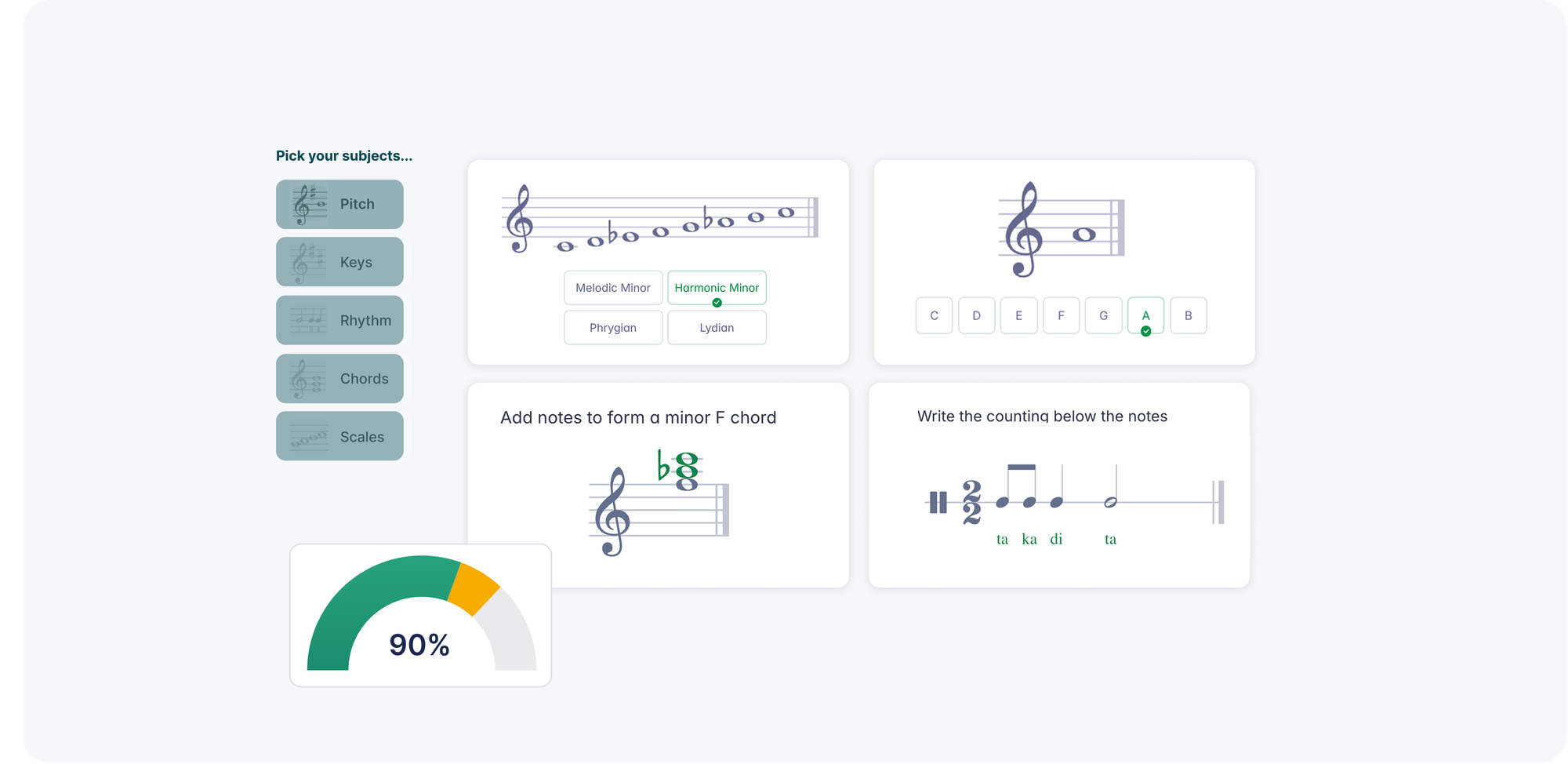

After moving to Flat for Education, her feedback turnaround dropped to two days. Not because she worked faster. Because the logistics that had been eating her time disappeared.

Here is what changed structurally:

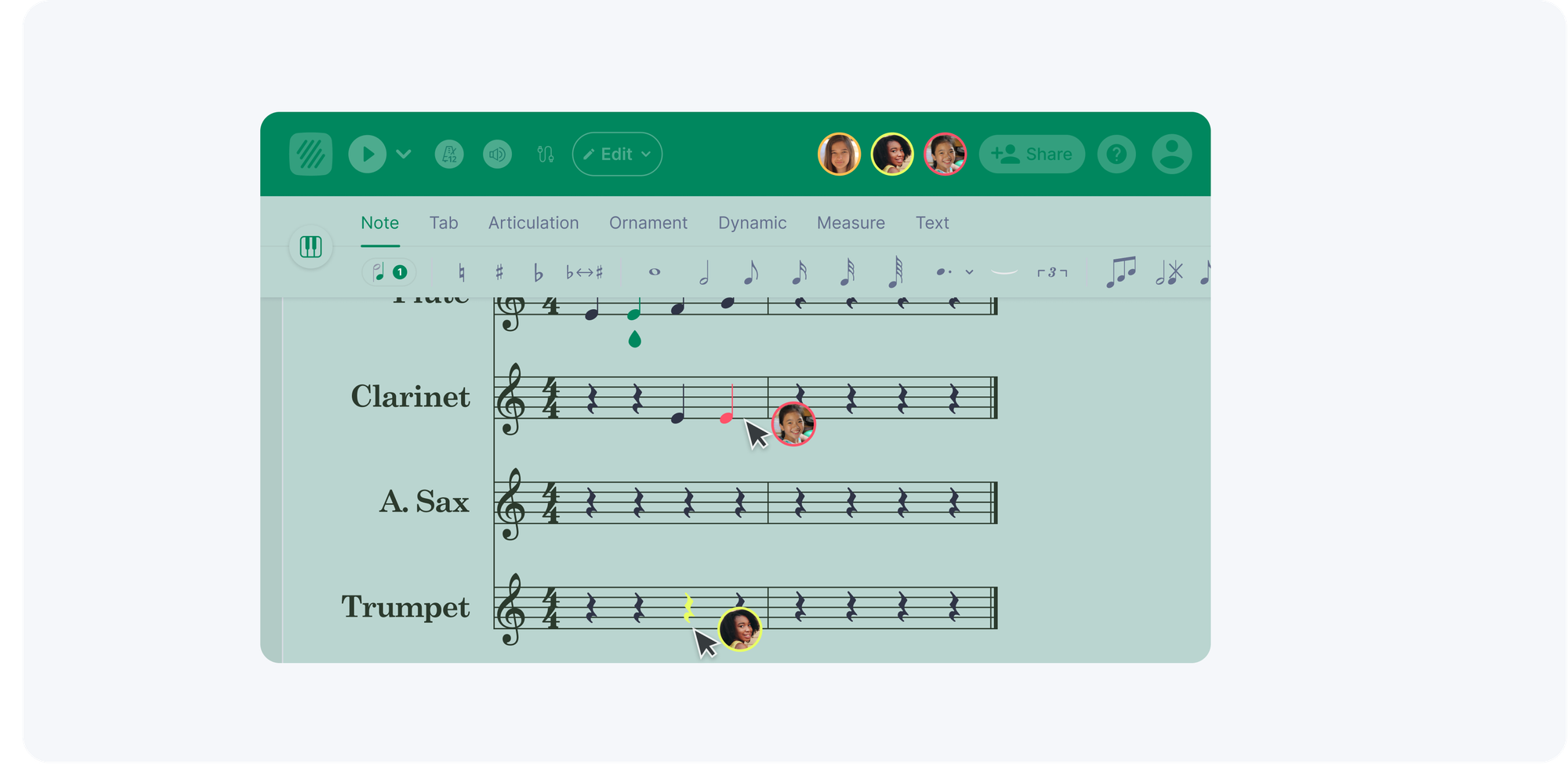

Students composed inside the platform rather than in disconnected tools. Every submission arrived in the same format, in the same place, with the student's name attached and organized by class. No downloads. No format conversion. No broken links.

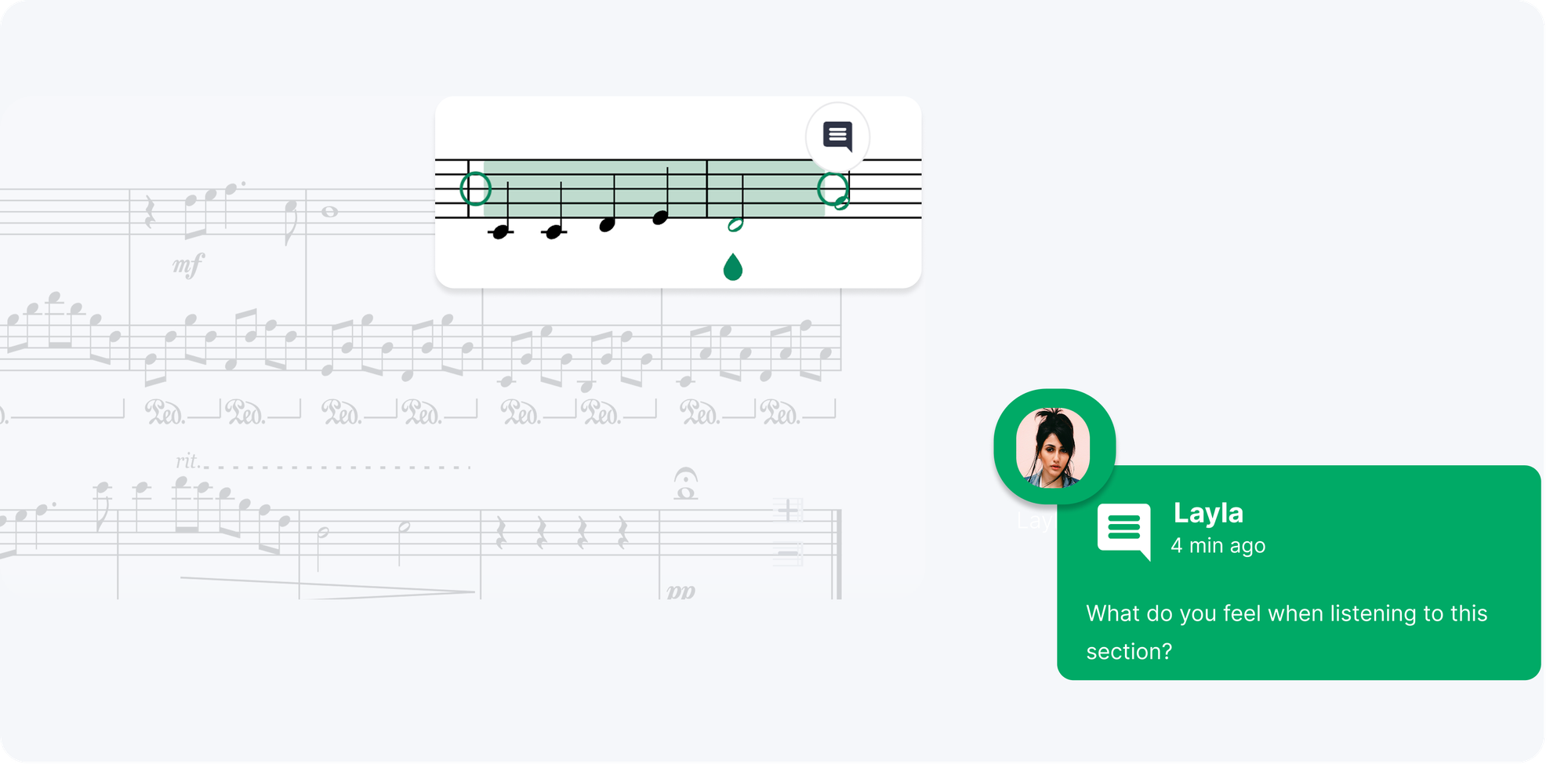

Feedback lived inside the score. Instead of writing comments in a separate document referencing measure numbers, she left timestamped comments directly on the notation – on the specific note, in the specific measure, at the moment of the issue. Students opened their returned submission and saw exactly what she was referring to.

Auto-grading handled the objective layer of theory exercises. The binary questions – did the intervals resolve correctly, were the voice leading rules followed, is the key signature right – were checked instantly. She reviewed results rather than replicating them manually.

What she did in those two days that previously would have taken nine was not administrative. It was musical. She was reading student work and responding to what students were actually trying to do.

One seventh-grade student had spent the previous semester submitting simple one-voice melodies, because anything more complex was too demanding to notate by hand. This time, she submitted a four-part chorale arrangement. The platform removed the barrier between what the student could hear and what she could turn in. The teacher noticed. The feedback was specific. The student came back with a revision.

That is what composition grading is supposed to produce.

The Practical Workflow: How to Grade Compositions Faster Without Grading Them Worse

Here is the workflow that works for music teachers grading composition at scale. You can adapt this to your current tools, though some steps require a platform that supports in-score feedback.

Step 1: Standardize the submission environment before students start

The single biggest time sink in composition grading is format chaos. Eliminate it before the assignment begins by requiring students to work inside a specific environment. If you use Flat for Education, students compose directly in the platform and submit through it. If you do not, require a specific export format (MusicXML or PDF from a named tool) and a specific submission channel. Make this non-negotiable.
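If you take the export route, a standard format also lets you run quick mechanical checks before reading anything. The sketch below uses Python's standard-library xml.etree.ElementTree to confirm that a MusicXML submission opens with the required key and time signature. It assumes an uncompressed, partwise file (root element score-partwise, the common export); compressed .mxl files are zip archives and would need unpacking first. The function name and return format are hypothetical.

```python
import xml.etree.ElementTree as ET
from typing import List, Optional, Union


def _text(element: Optional[ET.Element]) -> Optional[str]:
    """Stripped text of an element, or None if the element or its text is missing."""
    if element is None or element.text is None:
        return None
    return element.text.strip()


def check_musicxml(xml_text: Union[str, bytes], fifths: int,
                   beats: str, beat_type: str) -> List[str]:
    """Return problems with a submission's opening key and time signature.

    fifths follows MusicXML: 0 = no sharps or flats, positive = sharps,
    negative = flats. An empty list means the checks passed.
    """
    try:
        root = ET.fromstring(xml_text)
    except ET.ParseError as exc:
        return [f"not valid XML: {exc}"]
    if root.tag != "score-partwise":
        return [f"expected <score-partwise>, found <{root.tag}>"]

    # find() returns the first match in document order: the opening signature.
    attrs = "./part/measure/attributes"
    key = _text(root.find(f"{attrs}/key/fifths"))
    top = _text(root.find(f"{attrs}/time/beats"))
    bottom = _text(root.find(f"{attrs}/time/beat-type"))

    problems = []
    if key is None:
        problems.append("no key signature found")
    elif key != str(fifths):
        problems.append(f"key signature has {key} fifths, expected {fifths}")
    if top is None or bottom is None:
        problems.append("no time signature found")
    elif (top, bottom) != (beats, beat_type):
        problems.append(f"time signature is {top}/{bottom}, expected {beats}/{beat_type}")
    return problems
```

Passing a file's raw bytes (for example from pathlib's read_bytes()) lets the parser honor the file's own encoding declaration. Anything the function flags can go back to the student before you spend time reviewing the piece.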

Step 2: Separate your first pass from your feedback pass

Do not try to write feedback while you are forming your initial impression of a piece. Read through the entire submission first. Hear the playback. Form a judgment. Then return to write feedback. This separation makes feedback more coherent and takes less time than trying to react and write simultaneously.

Step 3: Address the objective layer first

Check technical accuracy against your rubric before engaging with the subjective elements. This anchors the grade and prevents the halo effect -- the tendency to let a particularly beautiful melody forgive voice-leading errors that should be corrected.

Step 4: Write one specific comment on the strongest moment

Before you write a single correction, find something specific to acknowledge. Not "great job." Something like: "The arrival at the dominant in bar 8 works especially well because you prepared it with a passing tone in the soprano -- that approach tone is doing real work." This orients the student to what to protect while they respond to corrections.

Step 5: Limit corrections to three and prioritize by impact

You can see fifteen things that could be better. Write three. The most impactful three, ordered by their effect on the fundamental quality of the piece. Students who receive fifteen corrections address zero of them because the cognitive load is too high. Students who receive three specific, prioritized corrections often address all three and improve.
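If you log candidate corrections as you read, whether in a spreadsheet or a script, the "three, ordered by impact" rule is easy to apply mechanically. Here is a minimal sketch. It assumes you give each correction an impact score of your own; the Correction type, its fields, and the sample notes are illustrative.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Correction:
    measure: int
    note: str
    impact: int  # teacher-assigned; higher means a bigger effect on the piece


def top_corrections(candidates: List[Correction], limit: int = 3) -> List[Correction]:
    """Return the `limit` highest-impact corrections, most important first.

    sorted() is stable, so corrections with equal impact keep the order
    in which they were noted.
    """
    return sorted(candidates, key=lambda c: c.impact, reverse=True)[:limit]


noted = [
    Correction(3, "weak cadence", 2),
    Correction(6, "parallel fifths with the bass", 5),
    Correction(9, "stem direction", 1),
    Correction(12, "phrase never reaches the dominant", 5),
    Correction(14, "range too low for the alto", 3),
]
for c in top_corrections(noted):
    print(c.measure, c.note)  # prints measures 6, 12 and 14, in that order
```

The two lowest-impact items are not deleted from your notes; they simply do not go to the student this round.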

Building Composition Grading Into Regular Practice

The larger shift that better tooling makes possible is frequency. When grading composition takes 13-21 hours per month, it becomes a semester-ending event. When it takes 4-6 hours, it becomes regular practice.

And regular composition practice with timely, specific feedback is the difference between students who learn to compose and students who complete one assignment per semester and retain nothing.

Music teachers know this. The logistics have prevented it.

Sarah Chen runs composition assignments monthly now. Not because she has more time than she did before. Because she is not spending her time on things a platform should handle.

Her gradebook, for the first time, reflects what actually happens in a music classroom. Not just written theory tests. Not just semester-end projects. Regular, documented, progressive composition work with feedback that connects directly to measurable improvement.

That is what "I'll add a composition unit" is supposed to mean when you first say it.

Frequently Asked Questions

How do you grade music composition assignments fairly? Fair composition grading requires a clear rubric that separates objective criteria (voice leading rules, required structural elements, key signature adherence) from subjective criteria (melodic quality, harmonic sophistication, creative risk). Objective criteria should be worth more at lower grade levels and less at higher ones, as technical accuracy becomes expected and compositional voice becomes the primary teaching target. Consistency across students is best achieved by grading one category across all submissions before moving to the next, rather than grading each submission completely before moving on.
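Grading category by category is simply a change of loop order. In this minimal sketch, the outer loop walks the rubric categories, starting with technical accuracy as in Step 3 of the workflow, and the inner loop walks every submission. The category keys and function name are illustrative.

```python
from typing import Iterator, List, Tuple

CATEGORIES = ["technical", "structure", "melody", "harmony", "risk"]


def category_first(students: List[str],
                   categories: List[str] = CATEGORIES) -> Iterator[Tuple[str, str]]:
    """Yield (category, student) pairs, finishing one category for every
    submission before starting the next."""
    for category in categories:    # outer loop: one rubric category at a time
        for student in students:   # inner loop: every submission for that category
            yield category, student


for category, student in category_first(["Ana", "Ben"], ["technical", "melody"]):
    print(category, student)
# technical Ana
# technical Ben
# melody Ana
# melody Ben
```

Grading each submission start to finish would swap the two loops. Keeping the category on the outside means you hold one set of criteria in mind at a time.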

What should a music composition rubric include? A strong composition rubric for school settings typically includes five categories: technical accuracy (objective rule-following), structural integrity (form and architecture), melodic quality (direction, tension, release), harmonic awareness (chord choices and rhythm), and creative risk or voice (intentional decisions beyond the minimum requirements). Each category should have clearly described criteria at each performance level so students understand what distinguishes a 3 from a 4 before they submit.

How long should it take to grade a student's composition? With specific feedback, a single composition review should take 8 to 15 minutes depending on the complexity of the assignment and the depth of feedback required. The larger time cost in most music teacher workflows is not the review itself but the surrounding logistics: collecting submissions, converting formats, and delivering feedback through disconnected channels. Purpose-built platforms like Flat for Education that handle submission and feedback inside the same environment reduce total grading time significantly.

How do you give feedback on student compositions? The most effective composition feedback is specific, measure-referenced, and balanced between acknowledgment and correction. Identify the strongest moment in the piece first with a specific observation rather than generic praise. Then limit corrections to three, ordered by their impact on the fundamental quality of the composition. Feedback that lives inside the score -- as timestamped comments on specific measures -- is more actionable for students than feedback written in a separate document.

Can music composition assignments be auto-graded? The objective layer of composition assignments -- voice leading rule-checking, interval identification, key signature adherence, rhythmic accuracy -- can be auto-graded by platforms designed for music education. Flat for Education's auto-grading handles this layer instantly, giving students feedback before they even submit. The subjective layer, including melodic quality, harmonic sophistication, and creative voice, requires teacher judgment and cannot be meaningfully automated. The combination of auto-grading for objective criteria and teacher feedback for subjective ones is the most efficient model for music composition assessment.

How do you handle composition submissions that arrive in different formats? Format inconsistency is one of the primary time costs in music composition grading. The most effective solution is to standardize the submission environment before the assignment begins by requiring students to work inside a specific platform. Browser-based tools like Flat for Education eliminate format variation entirely because students compose and submit inside the same environment. If multiple formats are unavoidable, establishing a required export format (MusicXML is the standard interchange format for notation software) and a single submission channel minimizes conversion overhead.

How do you grade composition assignments at scale across multiple class sections? For teachers managing multiple sections, the most important structural change is separating the submission layer from the feedback layer. Platforms that collect submissions in a single organized dashboard – with student names attached, organized by class – eliminate the file management overhead that makes scale grading time-consuming. Grading one rubric category across all submissions before moving to the next, rather than completing each submission before moving on, also improves speed and consistency at scale. Teachers managing 90 or more students report reducing composition grading time by more than 50 percent after moving from manual workflows to purpose-built platforms.

The Bottom Line

Grading music composition well is not a rubric problem. Every experienced music teacher knows what good composition looks like. The problem is the system around the rubric – submission chaos, disconnected feedback, format inconsistency, and the sheer logistics of returning meaningful notes to 90 students before the moment has passed.

When the system works, composition becomes what it should be: a regular, progressive practice with a feedback loop that makes students better composers over the course of a year. Not a semester-ending event that happens once and produces a grade nobody references again.

The rubric framework is above. The workflow is above. And if you want to see what it looks like when the logistics disappear entirely – when compositions arrive organized, feedback lives in the score, and objective criteria are checked before you even open the file – Flat for Education's 30-day free trial connects to your existing class roster in minutes.